v6.2.0 (E8.2) = Production Model - 74x Improvement!

E8 trained with optimized shard-based approach: 46 shards × 3 epochs on 68K images.
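The page does not say how the shards are built, so the following is only a rough sketch of what "46 shards × 3 epochs on 68K images" could mean in terms of shard sizes. The exact image count (68,000), the contiguous split, and the helper name `make_shards` are all assumptions made for illustration.

```python
# Sketch only: the source does not describe how shards are actually built.
NUM_SHARDS = 46       # from the text
NUM_EPOCHS = 3        # from the text
NUM_IMAGES = 68_000   # "68K" in the text; the exact count is an assumption


def make_shards(image_ids, num_shards):
    """Split image_ids into num_shards contiguous, near-equal shards."""
    base, extra = divmod(len(image_ids), num_shards)
    shards, start = [], 0
    for i in range(num_shards):
        size = base + (1 if i < extra else 0)  # first `extra` shards get one more item
        shards.append(image_ids[start:start + size])
        start += size
    return shards


shards = make_shards(list(range(NUM_IMAGES)), NUM_SHARDS)
shard_sizes = [len(s) for s in shards]

# One plausible reading of "46 shards × 3 epochs": every shard is visited 3 times.
total_shard_passes = NUM_SHARDS * NUM_EPOCHS

print(min(shard_sizes), max(shard_sizes), total_shard_passes)
```

Under these assumptions each shard holds roughly 1,478 images, and a full run makes 138 shard passes.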

mAP50: 0.145% (4.6x better than E2 baseline) · Recall: 4.2%

Artifactiq Schema: 39 Domain-Specific Classes

Artifactiq uses an OpenImages-based 39-class schema for fashion/merchandise detection:

• Apparel (8): Clothing, Dress, Suit, Jacket, Coat, Jeans, Shorts, Skirt

• Footwear (4): Footwear, Boot, High heels, Sandal

• Accessories (7): Watch, Sunglasses, Hat, Tie, Belt, Scarf, Glasses

• Bags (5): Backpack, Handbag, Suitcase, Briefcase, Luggage and bags
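As a small illustration, the groups listed above can be written as a lookup table. This is a sketch, not the project's actual schema file: the names `SCHEMA_GROUPS` and `group_of` are hypothetical, and only the classes named on this page are included. The four groups account for 24 of the 39 classes; the rest are not listed here.

```python
# Class groups exactly as listed on this page (24 of the 39 schema classes).
SCHEMA_GROUPS = {
    "Apparel": ["Clothing", "Dress", "Suit", "Jacket", "Coat", "Jeans", "Shorts", "Skirt"],
    "Footwear": ["Footwear", "Boot", "High heels", "Sandal"],
    "Accessories": ["Watch", "Sunglasses", "Hat", "Tie", "Belt", "Scarf", "Glasses"],
    "Bags": ["Backpack", "Handbag", "Suitcase", "Briefcase", "Luggage and bags"],
}
TOTAL_SCHEMA_CLASSES = 39  # from the text

# Reverse index: class name -> group name.
CLASS_TO_GROUP = {
    name: group for group, names in SCHEMA_GROUPS.items() for name in names
}


def group_of(class_name):
    """Return the group for a class, or 'Unlisted' if this page does not name it."""
    return CLASS_TO_GROUP.get(class_name, "Unlisted")


listed = len(CLASS_TO_GROUP)
print(f"{listed} of {TOTAL_SCHEMA_CLASSES} classes are listed on this page")
print(group_of("Boot"), group_of("Person"))
```

A class such as Person, which appears in the v6.2.0 results but not in the four groups, falls through to "Unlisted".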

Stock YOLOv8n (COCO) has limited overlap with these fashion classes.

Stock YOLOv8n (COCO)

80 generic classes · Fully trained

Has: person, backpack, car, bench, chair, handbag, cup...

Missing: Clothing, Shorts, Dress, Jacket, Footwear
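The limited overlap can be checked with a simple set intersection. The sketch below compares the fashion classes named on this page against the standard 80 COCO class names. The COCO list is reproduced from memory and is worth verifying against the model's own class names, and matching on exact lower-cased names is an assumption (for example, it does not treat "Glasses" and "wine glass" as related).

```python
# Standard 80 COCO class names (verify against the stock model's class list).
COCO_CLASSES = [
    "person", "bicycle", "car", "motorcycle", "airplane", "bus", "train", "truck",
    "boat", "traffic light", "fire hydrant", "stop sign", "parking meter", "bench",
    "bird", "cat", "dog", "horse", "sheep", "cow", "elephant", "bear", "zebra",
    "giraffe", "backpack", "umbrella", "handbag", "tie", "suitcase", "frisbee",
    "skis", "snowboard", "sports ball", "kite", "baseball bat", "baseball glove",
    "skateboard", "surfboard", "tennis racket", "bottle", "wine glass", "cup",
    "fork", "knife", "spoon", "bowl", "banana", "apple", "sandwich", "orange",
    "broccoli", "carrot", "hot dog", "pizza", "donut", "cake", "chair", "couch",
    "dining table", "potted plant", "bed", "toilet", "tv", "laptop", "mouse",
    "remote", "keyboard", "cell phone", "microwave", "oven", "toaster", "sink",
    "refrigerator", "book", "clock", "vase", "scissors", "teddy bear",
    "hair drier", "toothbrush",
]

# Fashion classes named on this page (a subset of the 39-class schema).
LISTED_FASHION_CLASSES = [
    "Clothing", "Dress", "Suit", "Jacket", "Coat", "Jeans", "Shorts", "Skirt",
    "Footwear", "Boot", "High heels", "Sandal",
    "Watch", "Sunglasses", "Hat", "Tie", "Belt", "Scarf", "Glasses",
    "Backpack", "Handbag", "Suitcase", "Briefcase", "Luggage and bags",
]

coco = {name.lower() for name in COCO_CLASSES}
fashion = {name.lower() for name in LISTED_FASHION_CLASSES}

overlap = sorted(fashion & coco)        # classes both models can name
missing = sorted(fashion - coco)        # fashion classes the stock model lacks

print("overlap:", overlap)
print("missing from COCO:", len(missing), "of", len(fashion))
```

With exact-name matching, only the bag classes and Tie are shared; none of the apparel or footwear classes appear in COCO.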

Artifactiq v6.2.0 E8.2 (ONNX)

39 domain classes · 46 shards × 3 epochs

Has: Clothing, Shorts, Dress, Jacket, Footwear, Boot, Backpack, Handbag, Sunglasses, Hat, Watch...

74x mAP50 improvement over baseline

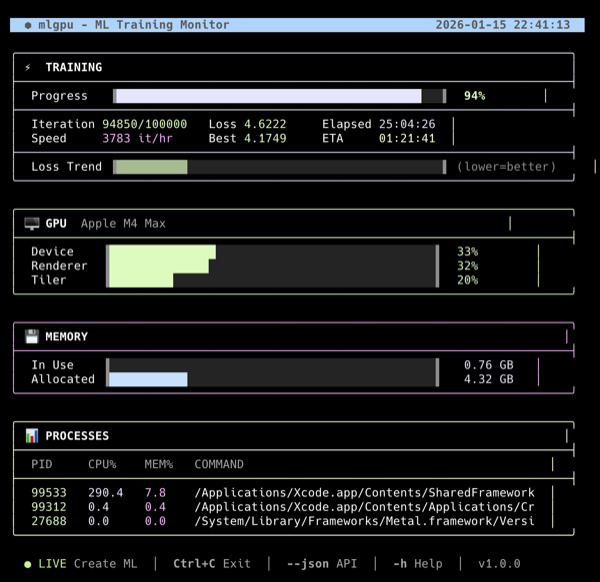

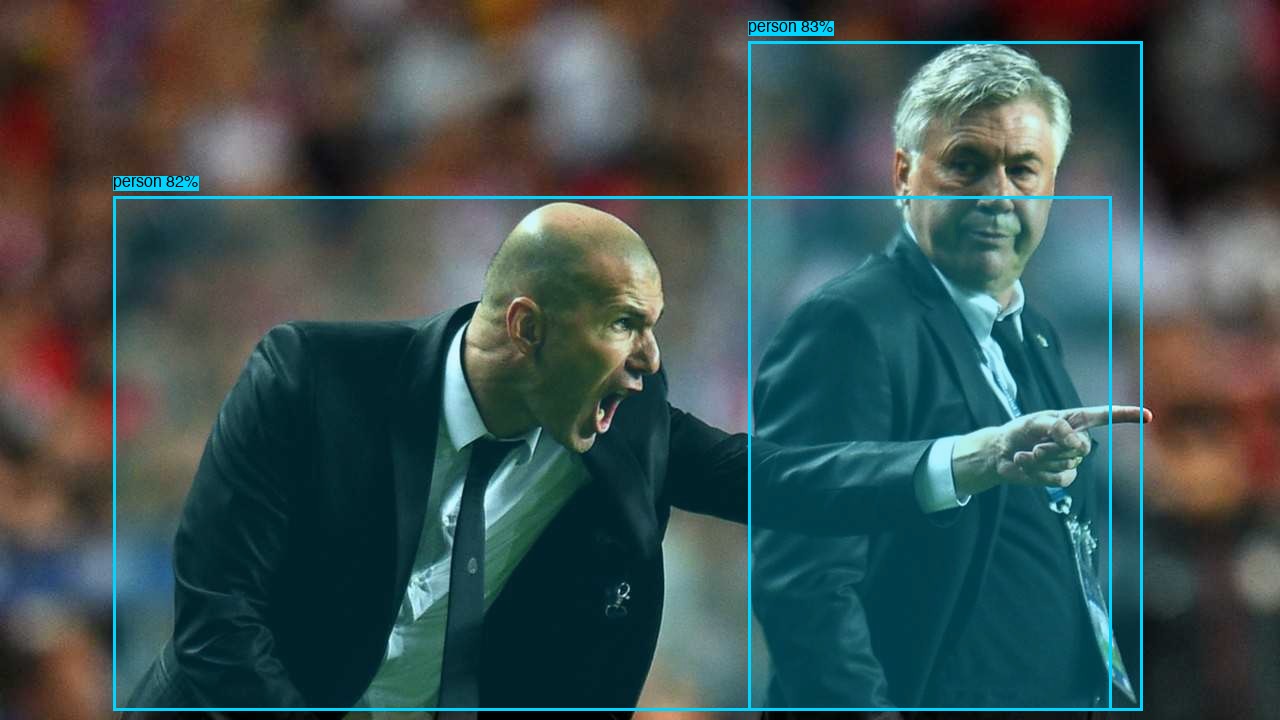

Batch test: 21 images · Stock: general objects · v6.2.0: domain-specific detection · 81ms avg

detected_bus.jpg

Stock YOLOv8n (5 det)

person 89.3%

person 88.1%

bus 83.8%

v6.2.0 E8.2

39-class domain model

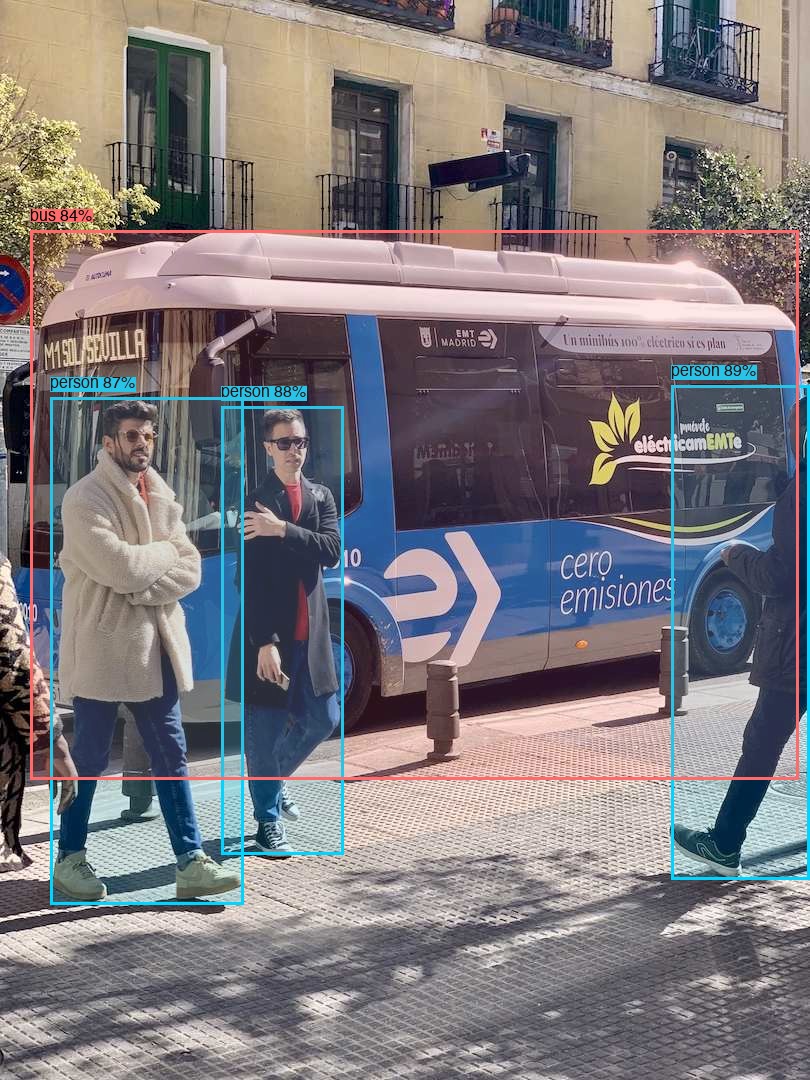

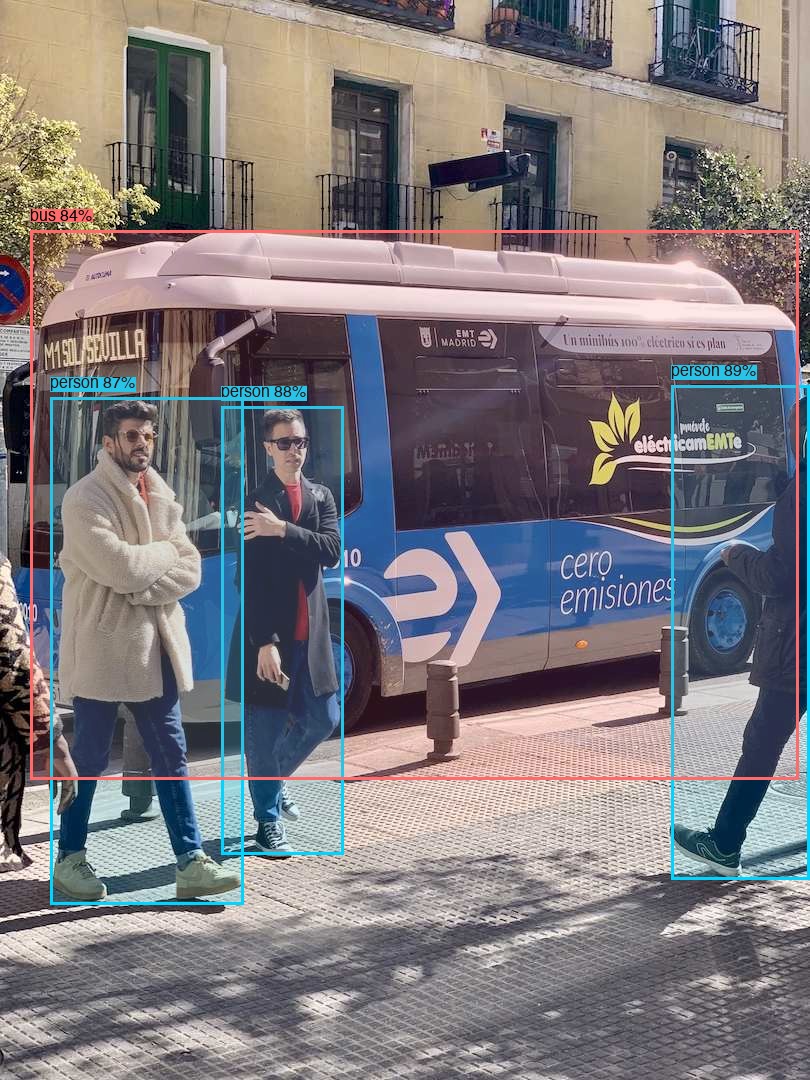

detected_zidane.jpg

Stock YOLOv8n (7 det)

person 82.7%

person 82.1%

person 76.3%

v6.2.0 E8.2

39-class domain model

detected_backpacker.jpg

Stock YOLOv8n (1 det)

person 85.2%

v6.2.0 E8.2

39-class domain model

Footwear 5.3%

angkor-ruins.webp CLOTHING DETECTED

Stock YOLOv8n (8 det)

person 85.5%

backpack 45.5%

car 9.7%

v6.2.0 E8.2

Person 9.9%

Clothing 8.6%

lake-trekking.webp landscape

Stock YOLOv8n (8 det)

cat 35.4%

cat 21.9%

person 14.6%

v6.2.0 E8.2

No products @ 5%

temple-explorer.webp

Stock YOLOv8n (3 det)

person 28.6%

handbag 16.6%

v6.2.0 E8.2

No products @ 5%

ocean-balcony.webp no people

Stock YOLOv8n (13 det)

bench 75.2%

chair 44%

chair 26%

binoculars-mountain.webp no people

Stock YOLOv8n (2 det)

fire hydrant 81.6%

bench 6.5%

beach-accessories.webp no people

Stock YOLOv8n (3 det)

bird 11.1%

handbag 10.5%

v6.2.0 E8.2

Hat/Sunglasses <5%

beach-scene.webp no people

Stock YOLOv8n (6 det)

bench 27.3%

bench 23.7%

cup 12.6%

# Model comparison test (conf=0.05):

artifactiq analyze --input ./images/ --model yolov8n # Stock COCO model

artifactiq analyze --input ./images/ --model artifactiq:v6.2.0 # Domain-specific model
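To make the conf=0.05 cutoff concrete, here is a minimal sketch of threshold filtering applied to a few of the scores reported above. It does not call the `artifactiq` CLI or any real API: `Detection` and `filter_by_confidence` are hypothetical names, and treating the threshold as inclusive (`>=`) is an assumption.

```python
from typing import NamedTuple


class Detection(NamedTuple):
    label: str
    confidence: float  # 0.0 to 1.0


def filter_by_confidence(detections, conf=0.05):
    """Keep detections at or above `conf`, highest confidence first."""
    kept = [d for d in detections if d.confidence >= conf]
    return sorted(kept, key=lambda d: d.confidence, reverse=True)


# Scores copied from the results on this page.
stock_angkor = [
    Detection("person", 0.855),
    Detection("backpack", 0.455),
    Detection("car", 0.097),
]
domain_angkor = [Detection("Person", 0.099), Detection("Clothing", 0.086)]
domain_backpacker = [Detection("Footwear", 0.053)]

# At conf=0.05 the low-confidence domain detections are still reported.
print([d.label for d in filter_by_confidence(domain_angkor)])
print([d.label for d in filter_by_confidence(domain_backpacker)])

# Raising the threshold to 0.10 would drop all of them.
print([d.label for d in filter_by_confidence(domain_angkor, conf=0.10)])
```

The low threshold matters here because every v6.2.0 score reported on this page is below 10%; a more typical cutoff would hide them all.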

() is the default model with . Domain-specific 39-class detection for fashion/merchandise.